APA 7: TWs Editor & ChatGPT. (2023, November 12). Scaling of Deep Learning Models in Chemistry Research. PerEXP Teamworks. [News Link]

A study by researchers at the Massachusetts Institute of Technology (MIT) examined the neural scaling behavior of large deep neural network (DNN) models built to generate useful chemical compositions and to learn interatomic potentials. Published in Nature Machine Intelligence, the research shows how quickly the performance of these models improves as both their size and the volume of training data grow.

Nathan Frey, one of the researchers behind the study, told Tech Xplore that the work was strongly inspired by the paper ‘Scaling Laws for Neural Language Models’ by Kaplan et al. That paper showed that increasing the size of a neural network and the amount of training data leads to predictable improvements in model performance. Frey and his team set out to test whether ‘neural scaling’ also applies to models trained on chemistry data, for applications such as drug discovery.

Frey and his collaborators began the project in 2021, before the release of widely recognized AI platforms such as ChatGPT and DALL-E 2. At the time, scaling up DNNs was expected to be particularly relevant in some fields, yet few studies had examined how such models scale in the physical or life sciences.

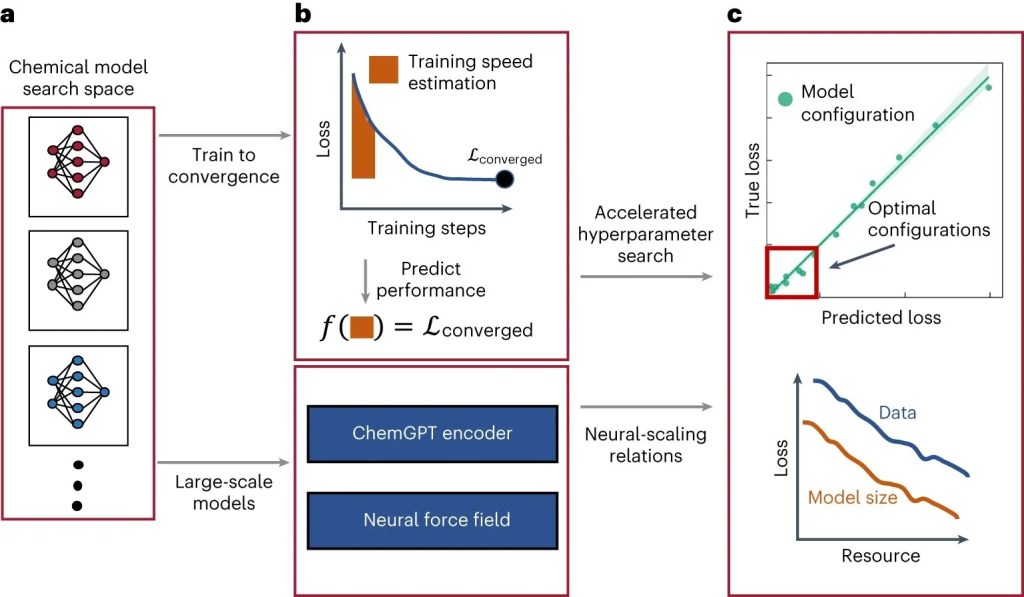

The researchers examined the neural scaling behavior of two kinds of models for chemical data: a large language model (LLM) and a graph neural network (GNN)-based model. The two serve different purposes: the LLM generates chemical compositions, while the GNN-based model learns the potentials between atoms in chemical substances.

Frey explained that the first model is an autoregressive language model based on the GPT architecture, called ‘ChemGPT,’ which was trained in a similar way to ChatGPT: it is tasked with predicting the next token in a string that represents a molecule. The second is a family of GNNs trained to predict the energy and forces of a molecule.
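To make the next-token objective concrete, here is a minimal sketch of how a molecule string can be turned into training examples for an autoregressive model. It assumes a SMILES string and a deliberately simplified, hypothetical tokenizer; the article does not say which string representation or tokenizer ChemGPT uses, so this should not be read as the authors' pipeline.

```python
import re

# Hypothetical, simplified tokenizer for SMILES strings (an assumption for
# illustration): bracket atoms, the two-letter halogens Br and Cl, then any
# single character. Production chemistry tokenizers handle more cases.
TOKEN_PATTERN = re.compile(r"\[[^\]]+\]|Br|Cl|.")


def tokenize(smiles: str) -> list[str]:
    """Split a molecule string into tokens."""
    return TOKEN_PATTERN.findall(smiles)


def next_token_pairs(tokens: list[str]) -> list[tuple[list[str], str]]:
    """Build (context, target) pairs: each target is the token that follows
    the context, which is what an autoregressive model learns to predict."""
    seq = ["<bos>"] + tokens + ["<eos>"]
    return [(seq[:i], seq[i]) for i in range(1, len(seq))]


tokens = tokenize("CC(=O)Cl")  # acetyl chloride, used only as an example
pairs = next_token_pairs(tokens)
for context, target in pairs:
    print(context, "->", target)
```

Each printed line is one training example: the model sees the context on the left and is scored on how well it predicts the token on the right.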

To study how ChemGPT and the GNNs scale, Frey and his colleagues measured the effect of model size and training dataset size on several relevant metrics. This allowed them to quantify the rate at which the models improve as they grow larger and are trained on more data.
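One common way to quantify such a rate is to fit a power law to loss as a function of model or dataset size, which is the form used in Kaplan et al. The sketch below assumes that form and uses synthetic, made-up numbers; the function name `fit_power_law` is hypothetical and none of the values come from the study.

```python
import numpy as np


def fit_power_law(sizes, losses):
    """Fit loss ~ a * size**(-alpha) by linear regression in log-log space.

    Returns (a, alpha). A larger alpha means loss falls faster with scale.
    """
    slope, intercept = np.polyfit(np.log(sizes), np.log(losses), 1)
    return float(np.exp(intercept)), float(-slope)


# Synthetic data for illustration only (not results from the paper):
# four model sizes and losses generated from an exact power law.
model_sizes = np.array([1e6, 1e7, 1e8, 1e9])
synthetic_losses = 5.0 * model_sizes ** -0.1

a, alpha = fit_power_law(model_sizes, synthetic_losses)
print(f"a = {a:.3f}, alpha = {alpha:.3f}")
```

On real measurements the points scatter around the fitted line, and the exponent `alpha` is the number that lets different architectures be compared on how efficiently they scale.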

Frey said the team found ‘neural scaling behavior’ in chemical models, similar to what has been observed in LLMs and vision models across a range of applications. The study also suggests that chemical models have not yet reached any fundamental limit to scaling, so there is still room to investigate further with more compute and larger datasets. In addition, incorporating physics into GNNs through a property known as ‘equivariance’ markedly improved scaling efficiency. This is notable because algorithms that change scaling behavior are hard to find.
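Equivariance here means that when a molecule is rotated, predicted vector quantities such as forces rotate with it, while scalar quantities such as energy stay the same. The sketch below checks that property numerically for a toy pairwise potential. It is not a GNN and not the model from the study; the potential and the function names are assumptions chosen only to illustrate the symmetry that equivariant networks build in.

```python
import numpy as np


def energy_and_forces(positions):
    """Toy potential E = sum over pairs i<j of (r_ij - 1)^2.

    Returns the scalar energy and the forces, i.e. the negative gradient
    of E with respect to each atom position.
    """
    n = len(positions)
    diffs = positions[:, None, :] - positions[None, :, :]  # shape (n, n, 3)
    dists = np.linalg.norm(diffs, axis=-1)                 # shape (n, n)
    upper = np.triu_indices(n, k=1)
    energy = np.sum((dists[upper] - 1.0) ** 2)
    # dE/dx_i = sum over j != i of 2 * (r_ij - 1) * (x_i - x_j) / r_ij
    safe_dists = dists + np.eye(n)  # avoid dividing by zero on the diagonal
    coeff = 2.0 * (dists - 1.0) / safe_dists
    np.fill_diagonal(coeff, 0.0)
    forces = -np.sum(coeff[:, :, None] * diffs, axis=1)
    return energy, forces


def rotation_z(theta):
    """Rotation matrix for an angle theta (radians) about the z axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 3))  # four atoms at random positions
R = rotation_z(0.7)

e_orig, f_orig = energy_and_forces(x)
e_rot, f_rot = energy_and_forces(x @ R.T)  # rotate every atom by R

print("energy invariant:  ", np.isclose(e_orig, e_rot))
print("forces equivariant:", np.allclose(f_rot, f_orig @ R.T))
```

A model that is equivariant by construction satisfies these checks for every input without having to learn the symmetry from rotated copies of the training data.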

Overall, the team’s work offers new insight into two types of AI models used in chemistry research and shows how much their performance can improve with scale. The findings could inform further studies of the potential and limitations of these models, as well as of other DNN-based techniques for specific scientific applications.

Frey added that since the work was first presented, follow-up research has already begun to explore the capabilities and limitations of scaling for chemical models. More recently, he has been working on generative models for protein design and considering how scaling affects models for biological data.

Resources

- NEWSPAPER Fadelli, I. & Tech Xplore. (2023, November 11). Study explores the scaling of deep learning models for chemistry research. Tech Xplore. [Tech Xplore]

- JOURNAL Frey, N. C., Soklaski, R., Axelrod, S., Samsi, S., Gómez‐Bombarelli, R., Coley, C. W., & Gadepally, V. (2023). Neural scaling of deep chemical models. Nature Machine Intelligence. [Nature Machine Intelligence]